How Knowledge Systems Self-Seal

Drivers of Expertise Erosion Across Research, NGOs, Policy, and Advisory Work

This piece is inspired by Alexander Kustov’s article “Public Engagement Is Good for Your Research” and by my own experience working inside knowledge-driven organisations, where research, expertise, and real-world practice constantly intersect.

“Every profession is a conspiracy against the laity.”

— George Bernard Shaw

Working in an expert organisation (one involved in both research and real field practice), attending conferences, reading reports, and talking to colleagues, I often find myself caught in a loop of cross-validation and mutually reinforcing professional biases.

The development of any expertise (from research to practice) accumulates not only knowledge but also “isotopes” of bias: stable residues of experience, feedback, and professional frameworks that, over time, settle into the way we think and operate. Under constant exposure, and without mechanisms to remove them, these residues accumulate and begin to change the structure of the system itself, triggering an erosion of expertise.

Across academia, think tanks, NGOs, the public sector, and advisory work, the way we design research or expertise, interpret data, write reports, and make decisions carries the imprint of accumulated biases and biased practices.

In research, it shows up in how we frame questions and interpret results. In consulting, in how we structure problems and recommend solutions. In NGOs and policy work, in how we define what matters and what gets ignored.

Today, we’ll set aside the broader conversation about critical thinking (and even expert ethics) and focus instead on systemic causes.

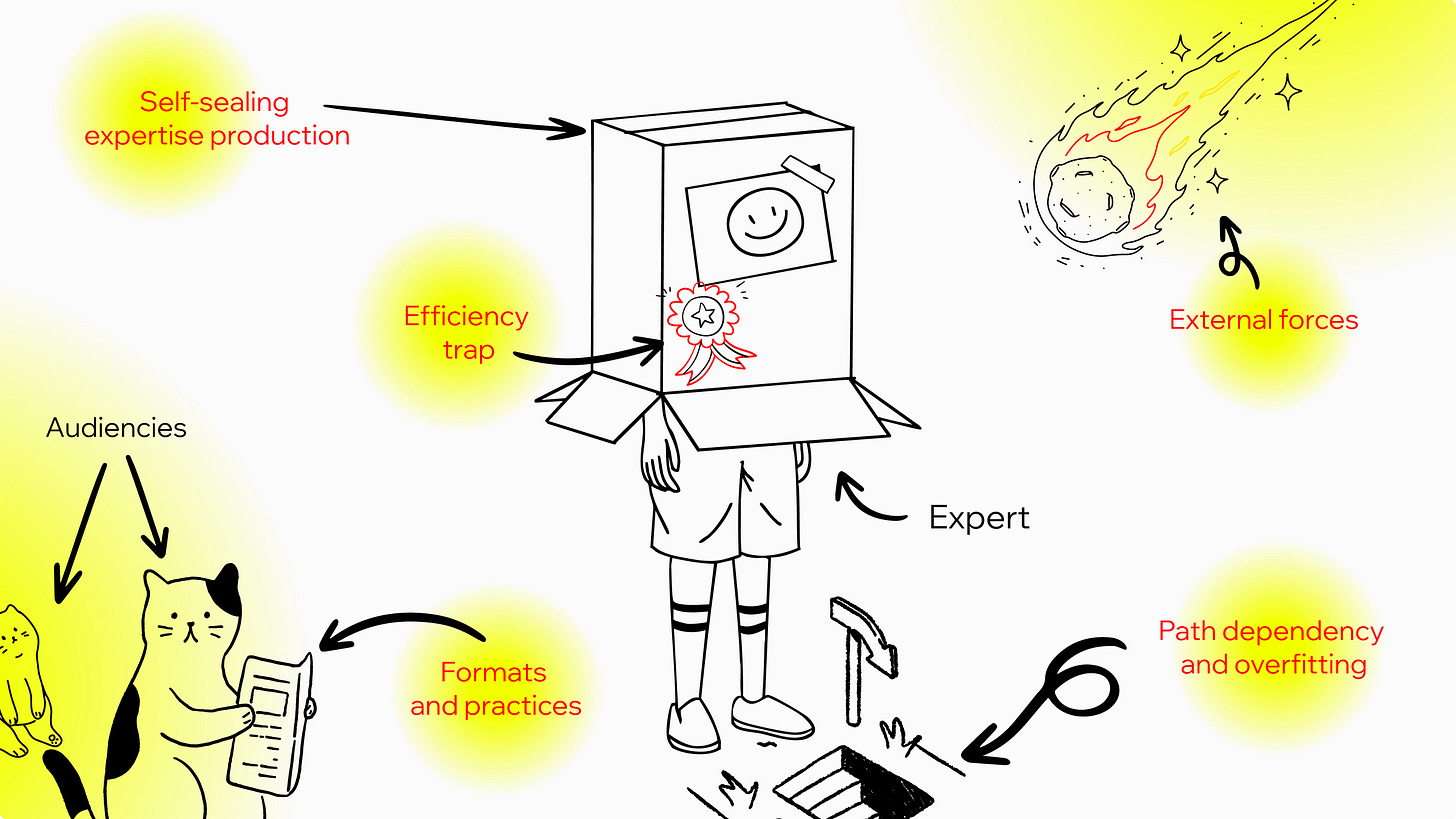

Five Drivers of Expertise Erosion

1. Self-sealing expertise production

The process of producing new knowledge and expertise often seems to rest on a basic assumption: the producer of knowledge (the researcher, expert, consultant) already operates at a level above the surrounding reality. Above the client, the audience, and the field being studied. After all, that’s the reason I am the expert, the one entrusted to carry out this important task: research, analysis, the search for truth.

At the end of the day, I’m the expert.

From the top of this ivory tower (some higher, some lower), we, consciously or not, tend to view what is in front of us from above, and we are often busy keeping outsiders away from both the process and its results.

How many scientific or expert conferences do you know that are designed not only for professional communities and stakeholders, but also for a broader audience?

How many researchers are willing to engage, and actually do engage, in public talks, presentations, and dialogue beyond academic or expert circles, intentionally investing in reaching communities or the general public?

This approach is exactly what locks us into a loop of validation, making us forget that:

“People outside the university often see things that people inside it miss, not because they are smarter, but because they are working from a different set of assumptions.” — Alexander Kustov, Public Engagement Is Good for Your Research

This statement applies far beyond academia: to experts, consultants, public service managers, policy practitioners… pretty much anywhere.

2. External forces

In this section, I bring together the forces and constraints that surround an expert, a researcher, or any knowledge factory and systematically shape the kinds of knowledge produced.

This is not about direct control (although that exists, too). It’s about something more subtle:

which types of knowledge are rewarded and which are not.

Career incentives

Stated plainly, this seemingly obvious factor sounds something like this: in the knowledge and expertise industries, perhaps more than anywhere else (with the possible exception of politics), alignment with policies and strategies, established norms and traditions, and overall value fit often shapes careers more strongly than the quality or real-world impact of the work.

Promotion, publication, and recognition are not distributed in a vacuum. They pass through a filter: alignment with dominant frames.

As a result, careers become dependent on reproducing the existing frame rather than expanding it. And the knowledge system starts chasing its own tail, reproducing itself and slowing down renewal.

Funding and market logic

Accepted and recognisable narratives (along with expected outcomes) are often a more reliable way to secure funding or clients than unconventional approaches, new questions, or non-standard solutions.

This applies both to grant funding (thematic open calls, funders with their own agendas) and to commercial expert and research work, where clients and the market begin to shape the “rules”, the “topics”, and sometimes even the expected conclusions.

As a result, the scope becomes constrained by what is considered fundable, acceptable, and legible.

Closed stakeholder loops

In many fields, our decisions, interpretations, and even the agenda itself are shaped within a relatively small circle of actors: funders, policy-makers, leading experts, institutions, and boards.

They are the ones who define the questions, validate the answers, and translate results into decisions.

Rarely are communities or the general public invited to this table. And when they are, it is often as sources of information to be used, not as participants in the process.

The system of knowledge and expertise production is not just influenced by stakeholders; it is defined by a relatively closed circle of them. And new knowledge is most often validated by this same circle, rather than by reality itself.

As a result, knowledge production is not pushed outward, but pulled back into familiar patterns.

3. Efficiency trap of expertise

Expertise is often perceived as the result of successful experience. Once we have proven (at least to ourselves) the validity and value of our work, our research, or our solutions, we begin to lower the level of challenge we apply to ourselves, to our methods, to our own expertise.

We return to the same approaches, assumptions, and solutions that once worked, repeating them again and again, while the world around us keeps changing.

What once made us fast, effective, and “right” can, with each repetition, become less relevant, less connected to reality, and increasingly tied to our own internal frame of reference.

Each cycle without reflection, challenge, or adjustment moves us further in the same direction: toward gradual blindness, and toward expertise sealed within the biases created by its own past success.

4. Path dependency and overfitting

The efficiency trap is reinforced by another systemic factor: dependence on the past. Past research, the expertise we’ve applied, and the solutions that once worked all shape the models we use today. They influence how we define problems, what questions we ask, and what we consider worth investigating.

As a result, new knowledge is often produced in a way that confirms what we already know or believe we know.

This is where the idea of overfitting becomes useful.

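In machine learning, overfitting describes a model fitted so closely to past data, noise included, that it becomes less useful for predicting anything new. The same risk applies to a forecaster who tries to make their prediction model (or hypothesis) match past data perfectly (Philip Tetlock): the better it explains the past, the less it may tell us about the future.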

The same dynamic appears in research, consulting, and policymaking. Instead of evidence challenging our assumptions, evidence increasingly confirms what we are already invested in believing.

And the further we move along this path, the easier it becomes to prove ourselves right rather than to question whether we are.

5. Formats and practices shape knowledge

Let’s look at what a typical “output” actually looks like in practice:
• a publication
• a PDF report on a website
• a presentation at a professional conference

If you have the time, energy, or support from a communications team, you might go a bit further:
• a few interviews or expert comments in professional media
• a blog post that turns into social media content

And if you really push it, maybe a public event.

Familiar formats don’t just limit engagement and impact; they predefine what can be said and how: the same structures, the same types of conclusions, the same ways of framing and phrasing. The same approach reproduces itself within established infrastructural boundaries.

Why does this remain unchanged? Because “this is how it’s done.” Because these formats are funded. Because they are legible to us, the expert community. They are easier to approve and easier to sell.

We produce knowledge and package it into formats that are not neutral. Over time, these formats become a framework in themselves, defining what can appear at all and keeping us within a familiar comfort zone.

Conclusion and What Comes Next

Expertise erosion happens through the reproduction of the same patterns and formats and through the absence of infrastructure for renewal, reflection, and intentional engagement.

Yes, we can point to critical thinking, ethics, and the ambition to be more open-minded, or maybe even write a few more blog posts for our organisation’s Facebook page.

But what interests me is something else: what structures, practices, and formats do we need so that knowledge stays alive, keeps evolving, and actually reaches the place it was meant for. The real world.

More on this in next week’s piece.
